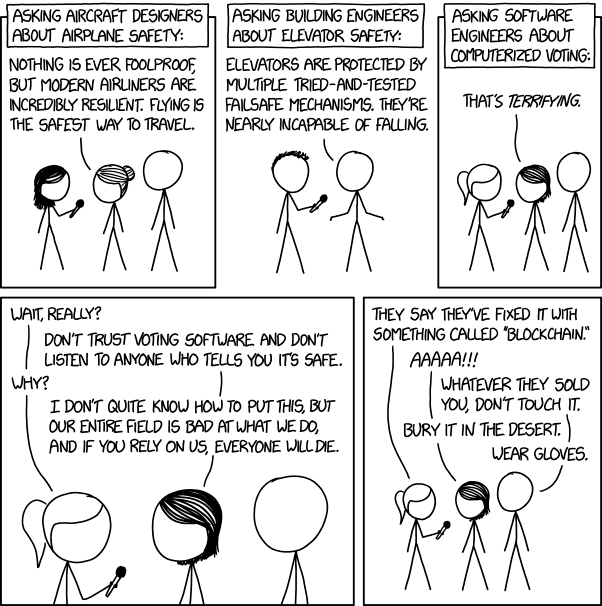

From XKCD, released under the Creative Commons 2.5 License

An app contributed to chaos at last week's 2020 Democratic Iowa Caucus. Hours after the caucus opened, it became obvious that something had gone wrong. No results had been reported yet. Reports surfaced that described technical problems and inconsistencies. The Iowa Democratic Party released a statement declaring that they didn't suffer a cyberattack, but instead had technical difficulties with an app.

A week later, we have a better understanding of what happened. A mobile app was written specifically for the caucus. The app was distributed through beta testing programs instead of the major app stores. Users struggled to install the app via this process. Once installed, it had a high risk of becoming unresponsive. Some caucus locations had no internet connectivity, rendering an internet-connected app useless. They had a backup plan: use the same phone lines that the caucus had always used. But online trolls jammed those lines "for the lulz."

As Tweets containing the words "app" and "problems" made their rounds, software engineers started spreading the above XKCD comic. I did too. One line summarizes the comic (and the sentiment that I saw on Twitter): "I don't quite know how to put this, but our entire field is bad at what we do, and if you rely on us, everyone will die." Software engineers don't literally believe this. But it also rings true. What do we mean?

Here's what we mean: We're decent at building software when the consequences of failure are unimportant. The average piece of software is good enough that it's expected to work. Yet most software is bad enough that bugs don't surprise us. This is no accident. Many common practices in software engineering come from environments where failures can be retried and new features are lucrative. And failure truly is cheap. If any online service provided by the top 10 public companies by market capitalization were completely offline for two hours, it would be forgotten within a week. This premise is driven home in mantras like "Move fast and break things" and "launch and iterate."

And the rewards are tremendous. A small per-user gain is multiplied by millions (or billions!) of users at many web companies. This is lucrative for companies with consumer-facing apps or websites. Implementation is expensive but finite, and distribution is nearly free. The consumer software engineering industry reaches a tradeoff: we reduce our implementation velocity just enough to keep our defect rate low, but not any lower than it has to be.

I'll call this the "website economic model" of software development: When the rewards of implementation are high and the cost of retries is low, management sets incentives to optimize for a high short-term feature velocity. This is reflected in modern project management practices and their implementation, which I will discuss below.

But as I said earlier, "We're decent at building software when the consequences of failure are unimportant." Our approach fails horribly when failure isn't cheap, like in Iowa. Common software engineering practices grew out of the website economic model, and when the assumptions of that model are violated, software engineers become bad at what we do.

How does software engineering work in web companies?

Let's imagine our hypothetical company: QwertyCo. It's a consumer-facing software company that earns $100 million in revenue per year. We can estimate the size of QwertyCo by comparing it to other companies. WP Engine, a Wordpress hosting site, hit $100 million ARR in 2018. Blue Apron earned $667 million of revenue in 2018. So QwertyCo is a medium-size company. It has between a few dozen and a few hundred engineers and is not public.

First, let's look at the economics of project management at QwertyCo. Executives have learned that you can't decree a feature into existence immediately. There are tradeoffs between software quality, time given, and implementation speed.

How much does software quality matter to them? Not much. If QwertyCo's website is down for 24 hours a year, they'd expect to lose 273,972 dollars total (assuming that uptime linearly correlates with revenue). And anecdotally, the site is often down for 15 minutes and nobody seems to care. If a feature takes the site down, they roll the feature back and try again later. Retries are cheap.
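The downtime figure above is simple proportional arithmetic, and it can be reproduced with a short sketch. The function name `downtime_cost` is hypothetical, and the code relies on the same assumption the text states: revenue accrues evenly across every hour of a 365-day year.

```python
ANNUAL_REVENUE = 100_000_000  # QwertyCo's yearly revenue from the text, in dollars


def downtime_cost(hours_down: float, annual_revenue: float = ANNUAL_REVENUE) -> float:
    """Revenue lost during an outage, assuming revenue is spread evenly over the year."""
    hours_per_year = 365 * 24
    return annual_revenue * hours_down / hours_per_year


day_cost = downtime_cost(24)            # a full day of downtime per year
quarter_hour_cost = downtime_cost(0.25)  # one 15-minute outage

print(f"24 hours down:   ${day_cost:,.2f}")
print(f"15 minutes down: ${quarter_hour_cost:,.2f}")
```

Under that linear assumption, a full day offline costs about 0.27% of annual revenue, and a 15-minute outage costs under $3,000, which is consistent with nobody seeming to care.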

How valuable is a new feature to QwertyCo? Based on my own personal observation, one engineer-month can change an optimized site's revenue in the ballpark of -2% to 1%. That's a monthly chance at up to $1 million of incremental annual QwertyCo revenue per engineer. Techniques like A/B testing even mitigate the mistakes: within a few weeks, you can detect negative or neutral changes and delete those features. The bad features don't cost a lot - they last a finite amount of time, and the wins are forever. Small win rates are still lucrative for QwertyCo.
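To make the asymmetry between bad and good features concrete, here is a rough sketch. The helper `net_effect`, the three-week detection window, and the one-year horizon are all illustrative assumptions rather than figures from any real company; only the $100 million revenue and the -2% to 1% range come from the text.

```python
ANNUAL_REVENUE = 100_000_000  # QwertyCo's yearly revenue from the text, in dollars
WEEKS_PER_YEAR = 52


def net_effect(change_fraction: float,
               detection_weeks: int = 3,   # assumed: A/B test flags a bad feature in ~3 weeks
               horizon_years: int = 1) -> float:
    """Revenue impact of one feature.

    A harmful feature only hurts until it is detected and deleted.
    A helpful feature keeps paying for the whole horizon.
    """
    weekly_change = ANNUAL_REVENUE / WEEKS_PER_YEAR * change_fraction
    if change_fraction < 0:
        return weekly_change * detection_weeks
    return weekly_change * WEEKS_PER_YEAR * horizon_years


loss = net_effect(-0.02)  # worst case in the stated range
win = net_effect(0.01)    # best case in the stated range

print(f"Worst case, rolled back after 3 weeks: ${loss:,.0f}")
print(f"Best case, kept for one year:          ${win:,.0f}")
```

With these assumed numbers, the worst-case feature costs roughly a tenth of what the best-case feature earns in its first year, even though its percentage effect is twice as large. That is the sense in which small win rates can still pay off.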

Considering the downside and upside, when should QwertyCo launch a feature? The economics suggest that features should launch even if they're high risk, as long as they occasionally produce revenue wins. Accordingly, every project turns into an optimization game: "How much can be implemented by $date?", "How long does it take to implement $big_project? What if we took out X? What if we took out X and Y? Is there any way that we can make $this_part take less time?"

Now let's examine a software project from the software engineer's perspective.

The software engineer's primary commodity is time. Safe software engineering takes a lot of time. Once a project crosses a small complexity threshold, it will have many stages (even if they don't happen as part of an explicit process). It needs to be scoped with the help of a designer or product manager, converted into a technical design or plan if necessary, and divided into subtasks if necessary. Then the code is written with tests, the code is reviewed, stats are logged and integrated with dashboards and alerting if necessary, and manual testing is performed if necessary. Additionally, coding often has up-front costs known as refactoring: modifying the existing system to make it easier to implement the new feature. Coding could take as little as 10-30% of the time required to implement a "small" feature.

How do engineers lose time? System-wide failures are the most obvious. Site downtime is an all-hands-on-deck situation. The most knowledgeable engineers stop what they are doing to make the site operational again. But time spent firefighting is time they are not adding value. Their projects are now behind schedule, which reflects poorly on them. How can downtime be mitigated? Written tests, monitoring, alerting, and manual testing all reduce the risk that these catastrophic events will happen.

How else do engineers lose time? Through subtle bugs. Some bugs are serious but uncommon. Maybe users lose data if they perform a rare set of actions. When an engineer receives this bug report, they must stop everything and fix the bug. This detracts from their current project, and can be a significant penalty over time.

Accordingly, experienced software engineers become bullish on code quality. They want to validate that code is correct. This is why engineering organizations adopt practices that, on their face, slow down development speed: code review, continuous integration, observability and monitoring, etc. Errors are more expensive the later they are caught, so engineers invest heavily in catching errors early. They also focus on refactorings that make implementation simpler. Simpler implementations are less likely to have bugs.

Thus, management and engineering have opposing perspectives on quality. Management wants the error rate to be high (but low enough), and engineers want the error rate to be low.

How does this feed into project management? Product and engineering split projects into small tasks that encompass the whole project. The project length is a function of the number of tasks and the number of engineers. Most commonly, the project will take too long, and it is adjusted by removing features. Then the engineers implement the tasks. Task implementation is often done inside of a "sprint." If the sprint time is two weeks, then every task has an implicit two-week timer. Yet tasks often take longer than you think. Engineers make tough prioritization decisions to finish on time: "I can get this done by the end of the sprint if I write basic tests, and if I skip this refactoring I was planning." The sprint process applies a constant downward pressure on time spent, which means that the engineer can either compromise on quality, or admit failure in the sprint planning meeting.

Some will say that I'm being too hard on the sprint process, and they're right. This is really because of time-boxed incentives. The sprint process is just a convenient way to apply time pressure multiple times: once when scoping the entire project, and once for each task. If the product organization is judged by how much value they add to the company, then they will naturally negotiate implementation time with engineers without any extra prodding from management. Engineers are also incentivized to implement quickly, but they might try optimizing for the long-term instead of the short-term. This is why multiple organizations are often given incentives to increase short-term velocity.

So by setting the proper incentive structure, executives get what they wanted at the beginning: they can name a feature and a future date, and product and engineering will naturally negotiate what is necessary to make it happen. "I want you to implement account-free checkouts within 2 months." And product and engineering will write out all of the 2 week tasks, and pare down the list until they can launch something called "account-free checkouts." It will have a moderate risk of breaking, and will likely undergo a few iterations before it's mature. But the breakage is temporary, and the feature is forever.

What happens if the assumptions of the website economic model are violated?

As I said before, "We're decent at building software when the consequences of failure are unimportant." The "launch and iterate" and "move fast and break things" slogans point to this assumption. But we can all imagine situations where a do-over is expensive or impossible. At the extreme end, a building collapse could kill thousands of people and cause billions of dollars in damage. The 2020 Iowa Democratic Caucus is a milder example. If the caucus fails, everyone will go home at the end of the day. But a party can't run a caucus a second time… not without burning lots of time, money, and goodwill.

Quick note: In this section, I'm going to use "high-risk" as a shorthand for "situations without do-overs" and "situations with expensive do-overs."

What happens when the website economic model is applied to a high-risk situation? Let's pick an example completely at random: you are writing an app for reporting Iowa Caucus results. What steps will you take to write, test, and validate the app?

First, the engineering logistics: you must write both an Android app and an iPhone app. Reporting is a central requirement, so a server is necessary. The convoluted caucus rules must be coded into both the client and the server. The system must report results to an end-user; this is yet another interface that you must code. The Democratic Party probably has validation and reporting requirements that you must write into the app. Also, it'd be really bad if the server went down during the caucus, so you need to write some kind of observability into the system.

Next, how would you validate the app? One option is user testing. You would show hypothetical images of the app to potential users and ask them questions like, "What do you think this screen allows you to do?" and "If you wanted to accomplish $a_thing, what would you tap?". Design always requires iteration, so you can expect several rounds of user testing before your mockups reflect a high-quality app. Big companies often perform several rounds of testing before implementing large features. Sometimes they cancel features based on this feedback, before they ever write a line of code. User testing is cheap. How hard is it to find 5 people to answer questions for 15 minutes for a $5 gift card? The only trick is finding users that are representative of Iowa Caucus volunteers.

Next, you need to verify the end-to-end experience: The app must be installed and set up. The Democratic Party must understand how to retrieve the results. A backup plan will be required in case the app fails. A good test might involve holding a "practice caucus" where a few Iowa Democratic Party operatives download the app and report results on a given date. This can uncover systemic problems or help set expectations. This could also be done in stages as parts of the product are implemented.

Next, the Internet is filled with bad actors. For instance, Russian groups famously ran a disinformation campaign across social media sites like Facebook, Reddit, and Twitter. You will need to ensure that they cannot interfere with the caucus. Can you verify that the results you receive are from Iowa caucusgoers? Also, the Internet is filled with people who will lie and cause damage just for the lulz. Can the system withstand Denial of Service attacks? If it can't, do you have a fallback plan? Who is responsible for declaring that the fallback plan is in action and communicating that to the caucuses? What happens if individuals hack into the accounts of caucusgoers? If there are no security experts within the company, it's plausible that an app that runs a caucus or election should undergo a full third-party security review.

Next, how do you ensure that there isn't a bug in the software that misreports or misaggregates the results? Relatedly, the Democratic Party should also be suspicious of you: can the Democratic Party be confident of the results even if your company has a bad actor? The results should be auditable with paper backups.

Ok, let's stop enumerating issues. You will require a lot of time and resources to validate that this is working.

The maker of the Iowa Caucus app was given $60,000 and 2 months. They had four engineers. $60k doesn't cover salary and benefits for four engineers for two months, especially on top of any business expenses. Money cannot be traded for time. There is little or no outside help.
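The budget figures above reduce to a quick calculation. This sketch only divides the numbers given in the text; the function name `budget_per_engineer_month` is hypothetical, and it assumes the entire $60,000 goes to engineer compensation with nothing left over for business expenses.

```python
def budget_per_engineer_month(budget: float, engineers: int, months: int) -> float:
    """Dollars available per engineer per month if the whole budget goes to staff."""
    return budget / (engineers * months)


per_month = budget_per_engineer_month(60_000, engineers=4, months=2)
annualized = per_month * 12  # what that monthly figure corresponds to over a full year

print(f"Per engineer-month: ${per_month:,.0f}")
print(f"Annualized:         ${annualized:,.0f}")
```

Even in that best case, the annualized figure has to cover salary, benefits, and taxes for each engineer, before paying for servers, design work, testing, or a security review.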

Let's imagine that you apply the common practice of removing and scoping down tasks until your timeline makes sense. You will do everything possible to save time. App review frequently takes less than a day, but in the worst case it can take a week or end in rejection. So let's skip that: the caucus staff will need to download the app through beta testing links. Even if the security review was free, it would take too long to implement all of the reviewers' recommendations. You're not doing a security review. Maybe you pay a designer $1000 to make app mockups and a logo while you build the server. You will plan to do one round of user testing (and later skip it once engineering timelines slip). Launch and iterate! You can always fix it before the next caucus.

And coding always takes longer than you expect! You will run into roadblocks. First, the caucus' rules will have ambiguities. This always happens when applying a digital solution to an analog world: the real world can handle ambiguity and inconsistency and the digital world cannot. The caucus may issue rule clarifications in response to your questions. This will delay you. The caucus might also change their rules at the last second. This will cause you to change your app very close to the deadline. Next, there are multiple developers, so there will be coordination overhead. Is every coder 100% comfortable with both mobile and server development? Is everyone fully fluent in React Native? JS? Typescript? Client-server communication? The exact frameworks and libraries that you picked? Every "no" will add development time to account for coordination and learning. Is everyone comfortable with the test frameworks that you are using? Just kidding. A few tests were written in the beginning, but the app changed so quickly that the tests were deleted.

Time waits for no one. 2 months are up, and you crash across the finish line in flames.

In the website economic model, crashing across the finish line in flames is good. After all, the flames don't matter, and you crossed the finish line! You can fix your problems within a few weeks and then move to the next project.

But flames matter in the Iowa caucus. As the evening wears on, the Iowa Democratic Party is fielding calls from people complaining about the app. You get results that are impossible or double-reported. Soon, software engineers are gleefully sharing comics and declaring that the Iowa Caucus never should have paid for an app, and that paper is the only technology that can be trusted for voting.

What did we learn?

This essay helped me develop a personal takeaway: I need to formalize the cost of a redo when planning a project. I've handled this intuitively in the past, but it should be explicit. This formalization makes it easier to determine which tasks cannot be compromised on. This matches my past behavior; I used to work in mobile robotics, where implementation cycles were long and the damage from failure could be high. We spent a lot of time adding observability and making foolproof ways to throttle and terminate out-of-control systems. I've also worked on consumer websites for a decade, where the consequences of failure are lower. I've been more willing to take on short-term debt and push forward in the face of temporary failure, especially when rollback is cheap and data loss isn't likely. After all, I'm incentivized to do this. Our industry also has techniques for teasing out these questions. "Premortems" are one example. I should do more of those.

On the positive side, some people outside of the software engineering profession will learn that sometimes software projects go really badly. People funding political process app development will be asking, "How do we know this won't turn into an Iowa Caucus situation?" for a few years. They might stumble upon some of the literature that attempts to teach non-engineers how to hire engineers. For example, the Department of Defense has a guide called "Detecting Agile BS" (PDF warning) that gives non-engineers tools for detecting red flags when negotiating a contract. Startup forums are filled with non-technical founders who ask for (and receive) advice on hiring engineers.

The software engineering industry learned nothing. The Iowa Caucus gives our industry an opportunity. We could be examining how the assumption of "expensive failure" should change our underlying processes. We will not take this opportunity, and we will not grow from it. The consumer-facing software engineering industry doesn't respond to the risk of failure. In fact, we celebrate our plan to fail. If the outside world is interested in increasing our code quality in specific domains, they should regulate those domains. This wouldn't be the first time: HIPAA and Sarbanes-Oxley are examples of regulations that affect engineering at website economic model companies. Regulation is insufficient, but it may be necessary.

But, yeah. That's what we mean when we say, "I don't quite know how to put this, but our entire field is bad at what we do, and if you rely on us, everyone will die." Our industry's mindset grew in an environment where failure is cheap and we are incentivized to move quickly. Our processes are poorly applied when the cost of a redo is high or a redo is impossible.